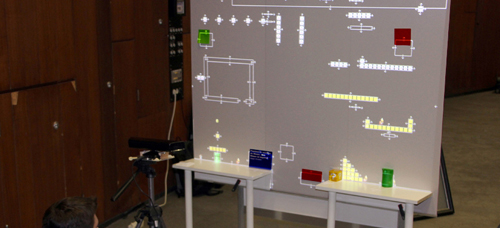

The screen, in any variation (flat, portable, touch-sensitive or projected), is still the place where videogame interaction happens. The world we explore in a game is traditionally a virtual one, trapped inside the screen or projection. While the ways of interacting with a character/avatar or stage have improved over the years, the rare level editors remain limited in their functions: they are still mostly drag and drop, and the user is again restricted to the game engine and the content already built. We propose to bring the game out of the screen, reducing the gap between creating and playing, and thus connecting the virtual and real worlds in one single spatial augmented reality. We do this by including the user's surrounding physical environment in the virtual world they are playing with. Furthermore, we want to encourage gamers' creativity by making it possible to create level content with almost any physical object, adding augmented virtual content to the stage. By placing a red object into the screen area, for example, our engine detects it as a fireball, producing an object that can eliminate our character. We scan the surroundings in real time, which means users are able to build and readjust their stages while playing with them. With this approach we want to rediscover the concept of augmented reality, allowing us to play in our own environment: our room, our office or any wall where we can stick and place objects (posters, notes, etc.): just post it and play it! We believe this can push the idea of level design to a new level, and also change the way people play together as a team or as adversaries.
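The red-object example can be illustrated with a minimal sketch of color-based detection. This is not the engine described above: the function names, the HSV thresholds and the frame representation (rows of RGB tuples) are all assumptions made for illustration, and only the pairing of red with a fireball comes from the text. The sketch also simplifies by merging every red pixel in a frame into one bounding box.

```python
import colorsys

# Hypothetical mapping from a detected color to a game element.
# Only the red -> fireball pairing is taken from the abstract.
COLOR_TO_ELEMENT = {"red": "fireball"}


def classify_pixel(r, g, b):
    """Return "red" for a strongly saturated red pixel, else None.

    The thresholds below are assumed values, not tuned ones.
    """
    h, s, v = colorsys.rgb_to_hsv(r / 255.0, g / 255.0, b / 255.0)
    is_red_hue = h < 0.05 or h > 0.95  # hue wraps around at red
    if is_red_hue and s > 0.6 and v > 0.4:
        return "red"
    return None


def detect_elements(frame):
    """Scan one frame and return the detected game elements.

    `frame` is a list of rows, each row a list of (r, g, b) tuples.
    All red pixels are merged into a single bounding box
    (min_x, min_y, max_x, max_y); an empty list means nothing was found.
    """
    xs, ys = [], []
    for y, row in enumerate(frame):
        for x, (r, g, b) in enumerate(row):
            if classify_pixel(r, g, b) == "red":
                xs.append(x)
                ys.append(y)
    if not xs:
        return []
    box = (min(xs), min(ys), max(xs), max(ys))
    return [{"type": COLOR_TO_ELEMENT["red"], "box": box}]
```

Calling `detect_elements` on every captured frame would approximate the real-time rescanning described above, so that moving or removing a red object changes the stage while the game is running. A real system would likely separate multiple objects and compensate for lighting and projection, which this sketch does not attempt.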
